|

|

使用 elasticsearch-head 面板连接 Elasticsearch(ES)发现如下错误:

查看状态信息,访问:超链接登录可见。

{

"cluster_name" : "elasticsearch",

"status" : "red",

"timed_out" : false,

"number_of_nodes" : 1,

"number_of_data_nodes" : 1,

"active_primary_shards" : 24,

"active_shards" : 24,

"relocating_shards" : 0,

"initializing_shards" : 0,

"unassigned_shards" : 7,

"delayed_unassigned_shards" : 0,

"number_of_pending_tasks" : 0,

"number_of_in_flight_fetch" : 0,

"task_max_waiting_in_queue_millis" : 0,

"active_shards_percent_as_number" : 77.41935483870968

}

head插件会以不同的颜色显示

1)、绿色——最健康的状态,代表所有的主分片和副本分片都可用;

2)、黄色——所有的主分片可用,但是部分副本分片不可用;

3)、红色——部分主分片不可用。(此时执行查询部分数据仍然可以查到,遇到这种情况,还是赶快解决比较好。)

查看索引状态,访问:超链接登录可见。

.monitoring-es-6-2021.01.22 0 p UNASSIGNED ALLOCATION_FAILED

一句话解释:未分配的分片。

启动ES的时候,通过Head插件不停刷新,你会发现集群分片会呈现紫色、灰色、最终绿色的状态。

为什么会出现 unassigned 分片?

如果不能分配分片,例如,您已经为集群中的节点数过分分配了副本分片的数量,则分片将保持UNASSIGNED状态。

其错误码为:ALLOCATION_FAILED。

unassigned 分片问题可能的原因?

1)INDEX_CREATED:由于创建索引的API导致未分配。

2)CLUSTER_RECOVERED :由于完全集群恢复导致未分配。

3)INDEX_REOPENED :由于打开open或关闭close一个索引导致未分配。

4)DANGLING_INDEX_IMPORTED :由于导入dangling索引的结果导致未分配。

5)NEW_INDEX_RESTORED :由于恢复到新索引导致未分配。

6)EXISTING_INDEX_RESTORED :由于恢复到已关闭的索引导致未分配。

7)REPLICA_ADDED:由于显式添加副本分片导致未分配。

8)ALLOCATION_FAILED :由于分片分配失败导致未分配。

9)NODE_LEFT :由于承载该分片的节点离开集群导致未分配。

10)REINITIALIZED :由于当分片从开始移动到初始化时导致未分配(例如,使用影子shadow副本分片)。

11)REROUTE_CANCELLED :作为显式取消重新路由命令的结果取消分配。

12)REALLOCATED_REPLICA :确定更好的副本位置被标定使用,导致现有的副本分配被取消,出现未分配。

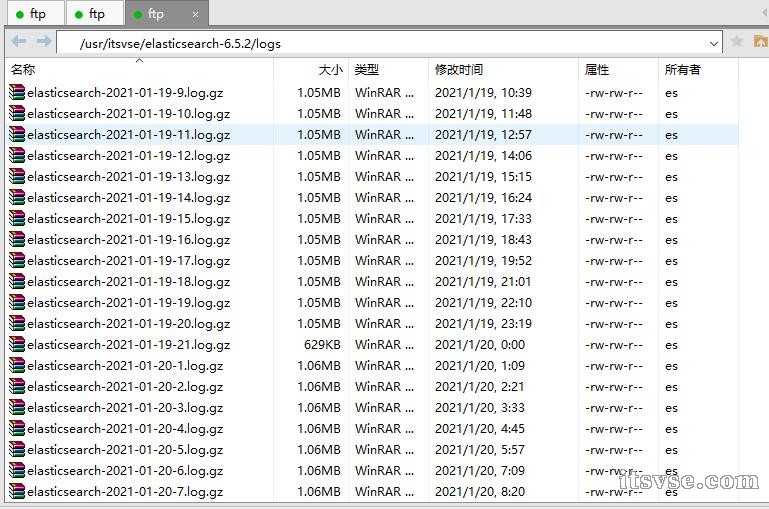

登录服务器查看 Elasticsearch(ES)日志,如下:

Elasticsearch 会输出很多日志,都放在 ES_HOME/logs 目录下。默认的日志记录等级是 INFO 。它提供了适度的信息,但是又设计好了不至于让你的日志太过庞大。

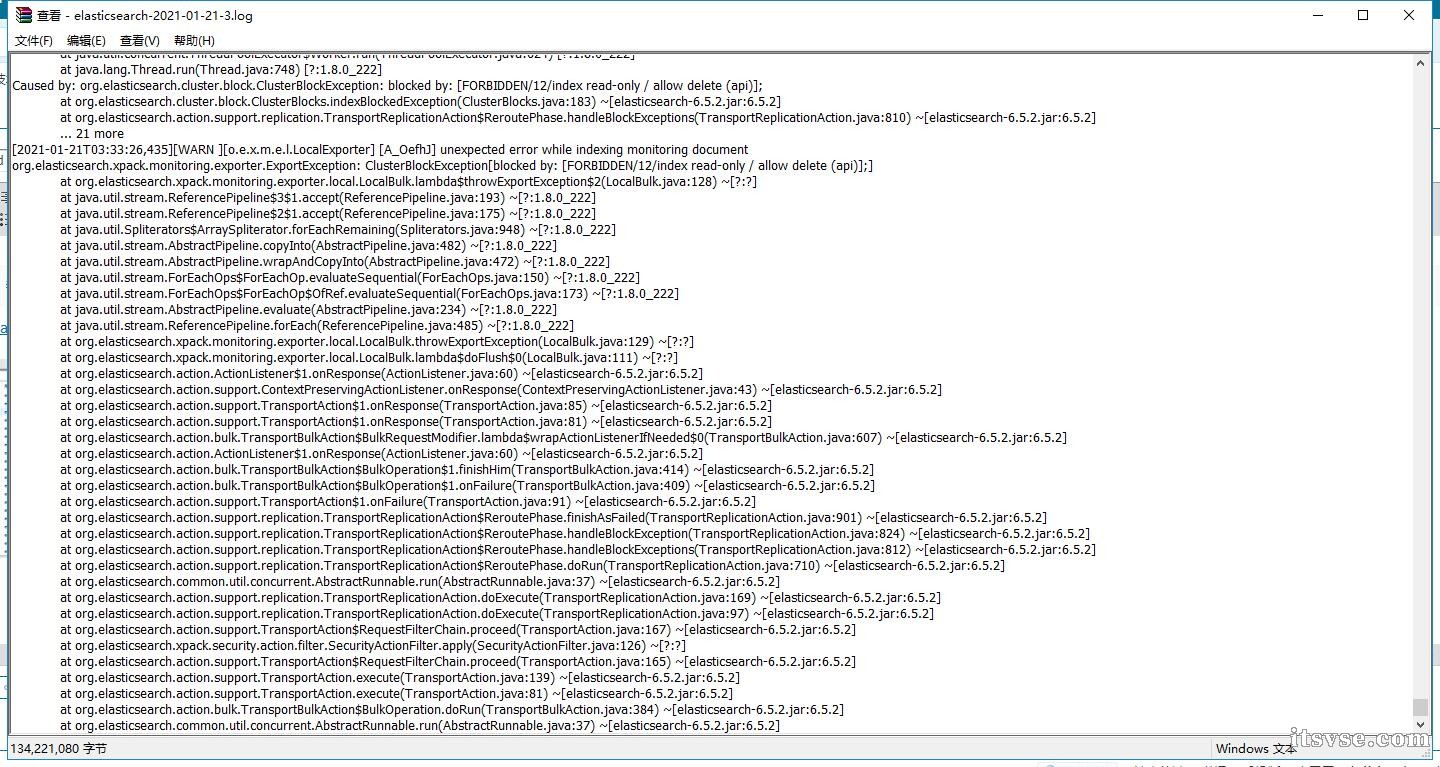

有大量的如下错误:

[2021-01-21T03:33:26,435][WARN ][o.e.x.m.e.l.LocalExporter] [A_OefhJ] unexpected error while indexing monitoring document

org.elasticsearch.xpack.monitoring.exporter.ExportException: ClusterBlockException[blocked by: [FORBIDDEN/12/index read-only / allow delete (api)];]

at org.elasticsearch.xpack.monitoring.exporter.local.LocalBulk.lambda$throwExportException$2(LocalBulk.java:128) ~[?:?]

at java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:193) ~[?:1.8.0_222]

at java.util.stream.ReferencePipeline$2$1.accept(ReferencePipeline.java:175) ~[?:1.8.0_222]

at java.util.Spliterators$ArraySpliterator.forEachRemaining(Spliterators.java:948) ~[?:1.8.0_222]

at java.util.stream.AbstractPipeline.copyInto(AbstractPipeline.java:482) ~[?:1.8.0_222]

at java.util.stream.AbstractPipeline.wrapAndCopyInto(AbstractPipeline.java:472) ~[?:1.8.0_222]

at java.util.stream.ForEachOps$ForEachOp.evaluateSequential(ForEachOps.java:150) ~[?:1.8.0_222]

at java.util.stream.ForEachOps$ForEachOp$OfRef.evaluateSequential(ForEachOps.java:173) ~[?:1.8.0_222]

at java.util.stream.AbstractPipeline.evaluate(AbstractPipeline.java:234) ~[?:1.8.0_222]

at java.util.stream.ReferencePipeline.forEach(ReferencePipeline.java:485) ~[?:1.8.0_222]

at org.elasticsearch.xpack.monitoring.exporter.local.LocalBulk.throwExportException(LocalBulk.java:129) ~[?:?]

at org.elasticsearch.xpack.monitoring.exporter.local.LocalBulk.lambda$doFlush$0(LocalBulk.java:111) ~[?:?]

at org.elasticsearch.action.ActionListener$1.onResponse(ActionListener.java:60) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.ContextPreservingActionListener.onResponse(ContextPreservingActionListener.java:43) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.TransportAction$1.onResponse(TransportAction.java:85) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.TransportAction$1.onResponse(TransportAction.java:81) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkRequestModifier.lambda$wrapActionListenerIfNeeded$0(TransportBulkAction.java:607) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.ActionListener$1.onResponse(ActionListener.java:60) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkOperation$1.finishHim(TransportBulkAction.java:414) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkOperation$1.onFailure(TransportBulkAction.java:409) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.TransportAction$1.onFailure(TransportAction.java:91) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.finishAsFailed(TransportReplicationAction.java:901) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.handleBlockException(TransportReplicationAction.java:824) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.handleBlockExceptions(TransportReplicationAction.java:812) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.doRun(TransportReplicationAction.java:710) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.common.util.concurrent.AbstractRunnable.run(AbstractRunnable.java:37) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction.doExecute(TransportReplicationAction.java:169) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction.doExecute(TransportReplicationAction.java:97) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.TransportAction$RequestFilterChain.proceed(TransportAction.java:167) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.xpack.security.action.filter.SecurityActionFilter.apply(SecurityActionFilter.java:126) ~[?:?]

at org.elasticsearch.action.support.TransportAction$RequestFilterChain.proceed(TransportAction.java:165) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.TransportAction.execute(TransportAction.java:139) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.TransportAction.execute(TransportAction.java:81) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkOperation.doRun(TransportBulkAction.java:384) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.common.util.concurrent.AbstractRunnable.run(AbstractRunnable.java:37) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction.executeBulk(TransportBulkAction.java:496) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction.executeIngestAndBulk(TransportBulkAction.java:243) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction.doExecute(TransportBulkAction.java:169) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.bulk.TransportBulkAction.lambda$processBulkIndexIngestRequest$4(TransportBulkAction.java:549) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.ingest.IngestService$4.doRun(IngestService.java:417) [elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.common.util.concurrent.ThreadContext$ContextPreservingAbstractRunnable.doRun(ThreadContext.java:723) [elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.common.util.concurrent.AbstractRunnable.run(AbstractRunnable.java:37) [elasticsearch-6.5.2.jar:6.5.2]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_222]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_222]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_222]

Caused by: org.elasticsearch.cluster.block.ClusterBlockException: blocked by: [FORBIDDEN/12/index read-only / allow delete (api)];

at org.elasticsearch.cluster.block.ClusterBlocks.indexBlockedException(ClusterBlocks.java:183) ~[elasticsearch-6.5.2.jar:6.5.2]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.handleBlockExceptions(TransportReplicationAction.java:810) ~[elasticsearch-6.5.2.jar:6.5.2]

... 21 more

经过分析,发现是因为ES所在服务器磁盘空间太低引起,一旦在存储超过95%的磁盘中的节点上分配了一个或多个分片的任何索引,该索引将被强制进入只读模式。

查看所有索引设置信息,访问:超链接登录可见。,如下图:

"knowledge" : {

"settings" : {

"index" : {

"number_of_shards" : "3",

"blocks" : {

"read_only_allow_delete" : "true"

},

"provided_name" : "knowledge",

"creation_date" : "1576206251443",

"number_of_replicas" : "0",

"uuid" : "yeSIP_otQo6JQ8ooRdr8hA",

"version" : {

"created" : "6050299"

}

}

}

}

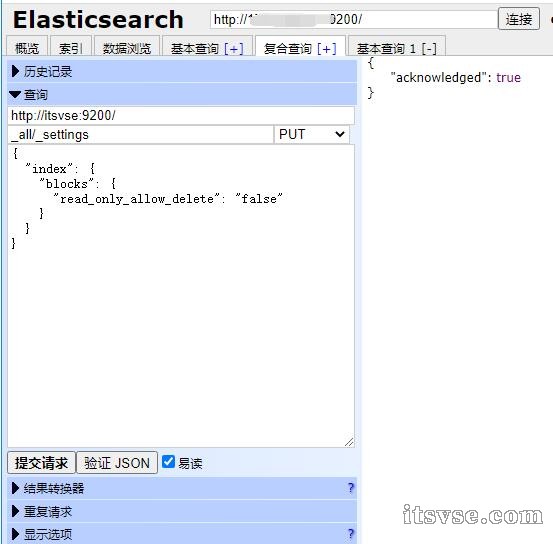

解决方案

在解决完磁盘问题,扩容或者删除垃圾文件,执行如下教程。

把read_only_allow_delete设置为false

获取使用 elasticsearch-head 发送 put 请求:超链接登录可见。,如下图:

这样还是无法去掉 red 的健康状态,直接删除“.monitoring-es-6-*”数据,状态就变成了健康,如下图:

(完)

|

上一篇:CentOS 统计每个文件夹大小,查找大文件下一篇:【实战】Elasticsearch(ES)集群健康值: yellow (6 of 7) 状态

|

|小黑屋|架构师

( 鲁ICP备14021824号-2 )|网站地图

|小黑屋|架构师

( 鲁ICP备14021824号-2 )|网站地图